My company recently purchased a pair of Nexus 9508 chassis, and I chose the N9K-9564PX as the default line card for them. In this blog post, I will talk about what we ran into with these line cards in the first 30 days in production, and what we did (with TAC's help) to resolve it.

Intro

Let's start with my selection. Cisco has two line cards for the Nexus 9508 that provide 48 ports of 1 and 10 GE SFP+: the N9K-9564PX and the N9K-9464PX. The 9500 model supports VXLAN, which was the main reason we decided to go with the 9564PX.

The Problem

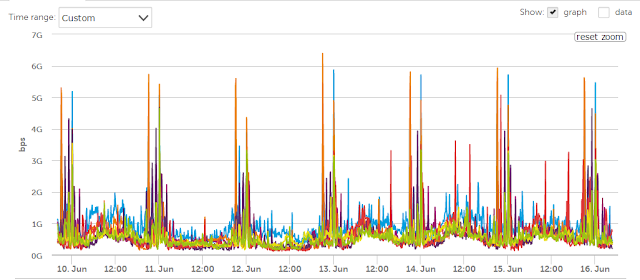

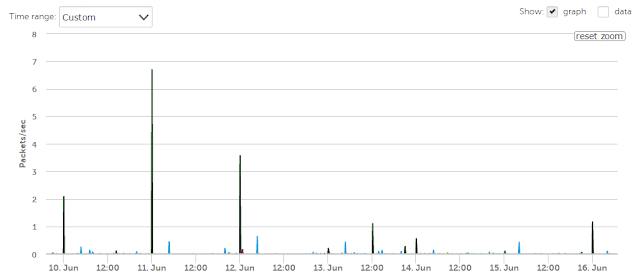

Within the first 30 days of deployment, I noticed that both chassis had a lot of output discards even though port utilization was fairly low. Below is a sample of our traffic between June 10th and June 16th: the top 10 interfaces by total bandwidth on one chassis, and the top 10 interfaces by output discards.

Top 10 Total Bandwidth

Top 10 Interfaces with Output Discards (displayed in packets/second)
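Monitoring tools generally derive figures like these from the difference between two polls of an interface's cumulative counters. The sketch below shows that arithmetic in Python. The `CounterSample` structure, the `rates` helper, and the sample numbers are all hypothetical and are not taken from our monitoring tool; counter wrap is not handled.

```python
from dataclasses import dataclass


@dataclass
class CounterSample:
    """One poll of an interface's cumulative counters (hypothetical structure)."""
    timestamp_s: float   # poll time in seconds
    out_octets: int      # cumulative bytes transmitted
    out_discards: int    # cumulative output discards


def rates(prev: CounterSample, curr: CounterSample, link_bps: float) -> tuple[float, float]:
    """Return (utilization_percent, discards_per_second) between two polls."""
    interval = curr.timestamp_s - prev.timestamp_s
    if interval <= 0:
        raise ValueError("samples must be in increasing time order")
    bits_sent = (curr.out_octets - prev.out_octets) * 8
    utilization = 100.0 * bits_sent / (interval * link_bps)
    discards_per_s = (curr.out_discards - prev.out_discards) / interval
    return utilization, discards_per_s


# Made-up numbers for illustration: a 10 GE port polled 60 seconds apart.
before = CounterSample(timestamp_s=0.0, out_octets=0, out_discards=0)
after = CounterSample(timestamp_s=60.0, out_octets=7_500_000_000, out_discards=1_200)
util, drops = rates(before, after, link_bps=10e9)
print(f"utilization={util:.1f}% discards/s={drops:.1f}")
```

Because utilization is averaged over the whole polling interval, a port can show a low percentage and still drop packets during bursts that last only a few milliseconds.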

The Buffer

Before we go into the solution, let's look at the buffer structure on these line cards. Cisco published a white paper, Cisco Nexus 9500 Series Switches Buffer and Queuing Architecture; feel free to read it if you're interested. In short, the 9500 line card has two buffers, one on each of its ASICs: the Network Forwarding Engine (NFE) and the Application Leaf Engine (ALE).

The NFE has a 12-MB buffer, shared among all active ports in both the ingress and egress directions. Once the NFE buffer is full, additional packets overflow to the ALE's hairpin buffer.

The ALE has a 40-MB buffer that is split into 3 regions:

- 10 MB for direct ingress traffic from the ALE to the fabric modules (backplane).

- 20 MB for direct egress traffic from the fabric modules to the ALE.

- 10 MB for hairpin traffic, that is, traffic between 2 ports on the same line card. This is considered an additional 10 MB for the NFE.
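To put those sizes in perspective, here is a rough back-of-the-envelope sketch in Python. It only restates the figures above and estimates how long a buffer could absorb a burst. It assumes 1 MB = 10^6 bytes and that the whole buffer is available to a single congested port, which is optimistic because the buffers are shared. The `burst_absorb_ms` helper is hypothetical.

```python
NFE_BUFFER_MB = 12            # shared across active ports, ingress and egress
ALE_REGIONS_MB = {
    "ingress_direct": 10,     # toward the fabric modules
    "egress_direct": 20,      # from the fabric modules
    "hairpin": 10,            # port to port on the same line card
}


def burst_absorb_ms(buffer_mb: float, arrival_gbps: float, drain_gbps: float) -> float:
    """Milliseconds until the buffer fills when traffic arrives faster than it drains."""
    excess_bps = (arrival_gbps - drain_gbps) * 1e9
    if excess_bps <= 0:
        return float("inf")   # the port keeps up, so the buffer never fills
    buffer_bits = buffer_mb * 1e6 * 8   # assumption: 1 MB = 10^6 bytes
    return buffer_bits / excess_bps * 1e3


# Hairpin case: NFE buffer plus the ALE hairpin region.
hairpin_total_mb = NFE_BUFFER_MB + ALE_REGIONS_MB["hairpin"]

# Illustration: two 10 GE senders bursting at line rate toward one 10 GE port.
fill_ms = burst_absorb_ms(hairpin_total_mb, arrival_gbps=20, drain_gbps=10)
print(f"hairpin buffering: {hairpin_total_mb} MB, absorbs the burst for about {fill_ms:.1f} ms")
```

Even in this best case the buffers hold only a few milliseconds of excess traffic, which is why bursts too short to show up in utilization graphs can still cause output discards.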

The Solution

I'm sure by now you have figured out what we did to resolve our issue. We changed our buffer profile from "Mesh Optimized" to "Burst Optimized", and that seems to take care of our burst traffic. We still see minor discards (1 or 2 over the course of 2 days), but to me that is acceptable. In addition, we still have the "Ultra Burst" option available. Below are the few commands needed to view and change the buffer profile on these line cards:

show hardware qos ns-buffer-profile

hardware qos ns-buffer-profile {burst|mesh|ultra-burst}
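If you script changes like this, it helps to validate the profile keyword before sending anything to the switch. The Python sketch below builds the command sequence from the two commands above. The `buffer_profile_commands` helper is hypothetical, and wrapping the change in `configure terminal` and `end` assumes the command is entered in global configuration mode, so check that against your NX-OS release.

```python
VALID_PROFILES = ("burst", "mesh", "ultra-burst")   # keywords from the command above


def buffer_profile_commands(profile: str) -> list[str]:
    """Build the CLI lines to set and then verify the buffer profile."""
    if profile not in VALID_PROFILES:
        raise ValueError(f"unknown profile {profile!r}; expected one of {VALID_PROFILES}")
    return [
        "configure terminal",                        # assumption: global config mode
        f"hardware qos ns-buffer-profile {profile}",
        "end",
        "show hardware qos ns-buffer-profile",       # confirm the active profile
    ]


for line in buffer_profile_commands("burst"):
    print(line)
```

The function only returns text; how the lines reach the switch (SSH session, automation tool, copy and paste) is left to you.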

Nice post. What software are you using for traffic stats?

Thank you. We use LogicMonitor as our monitoring tool.

Thanks Hieu! I'll share this with the rest of my team!

Hello, does this command require a reboot, or does it impact service?

ReplyDeleteThis is the great post and I hope more different ideas from your post. Really I enjoy to visit your post and keep posting...

ReplyDeletePega Training in Chennai

Pega Course in Chennai

Primavera Training in Chennai

Unix Training in Chennai

Excel Training in Chennai

Corporate Training in Chennai

Embedded System Course Chennai

Linux Training in Chennai

Microburst/Burst Traffic:

Microbursts, or burst traffic, are sudden, short-lived spikes in network traffic volume that exceed the average baseline. These bursts can overwhelm network resources and lead to packet loss, latency spikes, and performance degradation.

Cisco Nexus 9508 with N9K-9564PX Linecard:

The Cisco Nexus 9500 series switches, including the 9508 with the N9K-9564PX linecard, use a two-stage buffer architecture:

Network Forwarding Engine (NFE): Provides a smaller, high-performance buffer for initial packet reception.

Application Leaf Engine (ALE), or Northstar buffer: Offers a larger buffer for absorbing traffic fluctuations and preventing packet loss.

ALE/Northstar Buffer and Microbursts:

The ALE/Northstar buffer plays a crucial role in handling microbursts and burst traffic. Here's how:

Buffer Absorption: During microbursts, the ALE/Northstar buffer absorbs the excess traffic, preventing it from overwhelming the NFE buffer and causing packet loss.

Buffer Sharing: The ALE/Northstar buffer uses dynamic allocation, allowing it to share resources efficiently between all ports on the linecard. This helps keep buffer space available even when traffic patterns are uneven across ports.


Hello, in my company we have the same issue with output discards. We have tried to solve it with the same commands in this post, but we still have the issue. Any thoughts? Could it be that some output discards are normal behavior?
